In this blog series, we explore how a distributed cache architecture can improve application performance and scalability.

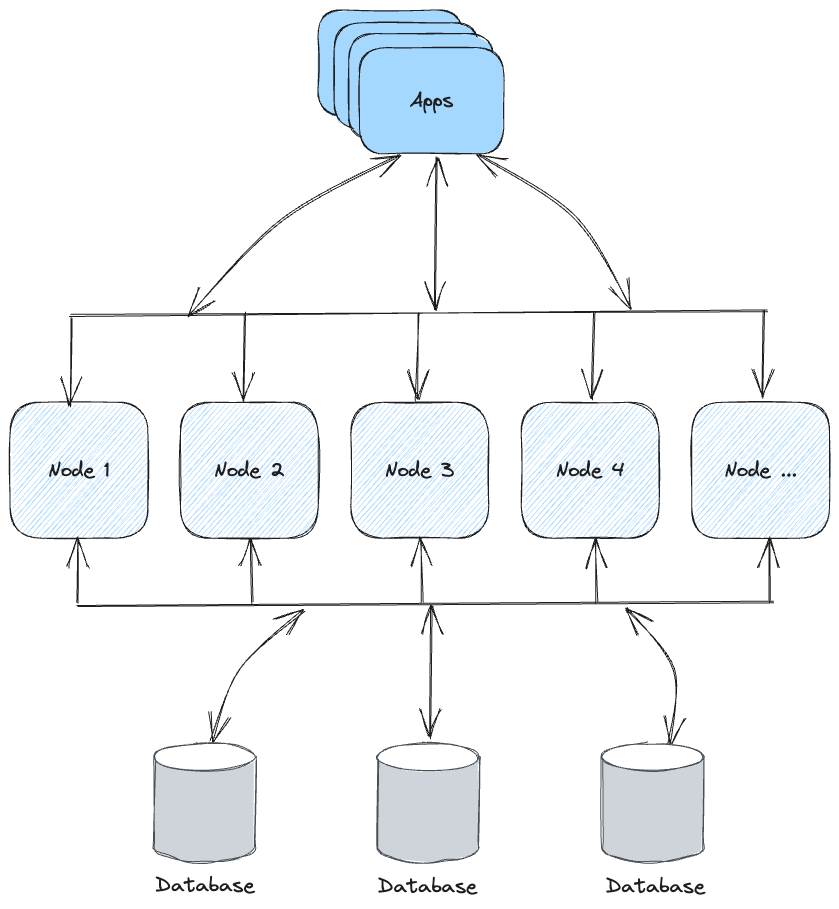

In modern software development, performance and scalability are crucial. One technology that has gained significant traction in addressing both is distributed caching: storing frequently accessed data in memory across multiple nodes or servers to accelerate data retrieval and improve application responsiveness.

A client approached us for help improving the performance of their ML pipeline. Each run took hours, which disrupted their business operations and also degraded other components of their application infrastructure.

Upon investigation, it became evident that the client was repeatedly executing the same query, even though it returned identical results each time. By introducing a distributed cache, we significantly reduced the cost of those repeated executions. As part of our proof of concept (PoC), we developed a custom predicate within a Hazelcast cluster. This let us run a complex query against a dataset of 15 million rows in under a second, in stark contrast to the minutes the same query took against the database.
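To make the "same query, same result" problem concrete, here is a minimal cache-aside sketch in Python. It is not the client's code or our Hazelcast predicate: `QueryCache`, `run_query`, and the in-process dictionary standing in for a distributed map are all hypothetical names chosen for illustration.

```python
import hashlib
import json
from typing import Any, Callable, Dict, Tuple


class QueryCache:
    """Cache-aside wrapper: run a query once, serve repeats from memory."""

    def __init__(self, run_query: Callable[[str, Tuple[Any, ...]], Any]) -> None:
        self._run_query = run_query        # hypothetical call into the database
        self._store: Dict[str, Any] = {}   # stand-in for a distributed map
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(sql: str, params: Tuple[Any, ...]) -> str:
        # Derive a stable cache key from the query text and its parameters.
        payload = json.dumps([sql, list(params)], default=str)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, sql: str, params: Tuple[Any, ...] = ()) -> Any:
        key = self._key(sql, params)
        if key in self._store:
            self.hits += 1
            return self._store[key]
        # Cache miss: pay the database cost once, then remember the result.
        self.misses += 1
        result = self._run_query(sql, params)
        self._store[key] = result
        return result
```

With a wrapper like this, only the first call for a given query and parameter set reaches the database; every repeat is answered from memory. The sketch deliberately ignores expiry and invalidation, which the sections below discuss.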

Advantages of Using Distributed Cache

- Improved performance through memory-first reads

- Reduced load on primary databases

- Better horizontal scalability under traffic growth

- Lower latency in distributed systems

- Flexible cache strategies (TTL, LRU, eviction policies)

1. Improved Performance

One of the primary benefits of distributed caching is a dramatic improvement in application performance. Because cached data is held in memory, retrieval is significantly faster than reading from disk-backed storage or querying a database. This results in quicker response times and a smoother user experience.

2. Reduced Database Load

By serving frequently accessed data from the cache instead of the database, you can significantly reduce the load on your database server. This improves overall performance and helps prevent bottlenecks during peak usage.

3. Scalability

Distributed caching systems are designed to scale horizontally. As traffic and data load increase, additional cache nodes can be added to distribute load while maintaining performance.
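One common way to spread keys across cache nodes so that adding a node moves only a fraction of the data is consistent hashing. The sketch below is a generic illustration of that technique, not a description of how any specific product partitions its data; `HashRing`, the node names, and the choice of 100 virtual nodes per server are assumptions made for the example.

```python
import bisect
import hashlib
from typing import Dict, List


class HashRing:
    """Consistent-hash ring that maps keys to cache nodes."""

    def __init__(self, nodes: List[str], vnodes: int = 100) -> None:
        self._vnodes = vnodes               # virtual nodes per physical node
        self._ring: List[int] = []          # sorted positions on the ring
        self._owner: Dict[int, str] = {}    # ring position -> node name
        for node in nodes:
            self.add_node(node)

    @staticmethod
    def _hash(value: str) -> int:
        digest = hashlib.sha256(value.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def add_node(self, node: str) -> None:
        # Place several virtual positions per node to even out the load.
        for i in range(self._vnodes):
            pos = self._hash(f"{node}#{i}")
            if pos in self._owner:
                continue  # position already taken; skip the rare collision
            bisect.insort(self._ring, pos)
            self._owner[pos] = node

    def node_for(self, key: str) -> str:
        if not self._ring:
            raise ValueError("ring has no nodes")
        # The first position clockwise from the key's hash owns the key.
        idx = bisect.bisect_right(self._ring, self._hash(key)) % len(self._ring)
        return self._owner[self._ring[idx]]
```

When a fourth node joins a three-node ring built this way, the only keys that change owner are the ones the new node takes over; everything else stays where it was, which is what keeps scale-out cheap.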

4. Latency Reduction

Distributed caches are often deployed close to application services, reducing network latency. This is particularly useful in distributed and microservices architectures.

5. Caching Strategy Flexibility

A distributed cache supports multiple strategies, such as least-recently-used (LRU) eviction, time-to-live (TTL) expiry, and custom eviction policies, so it can be fine-tuned for specific use cases.
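As a rough illustration of how TTL and LRU combine, the following single-process sketch expires entries after a fixed lifetime and evicts the least recently used entry once a size cap is reached. `TtlLruCache` and its parameters are hypothetical; a real distributed cache would expose these as configuration rather than code.

```python
import time
from collections import OrderedDict
from typing import Any, Callable, Hashable, Tuple


class TtlLruCache:
    """Bounded cache with per-entry TTL and LRU eviction."""

    def __init__(self, max_entries: int, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        if max_entries <= 0:
            raise ValueError("max_entries must be positive")
        self._max = max_entries
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._data: "OrderedDict[Hashable, Tuple[float, Any]]" = OrderedDict()

    def get(self, key: Hashable, default: Any = None) -> Any:
        item = self._data.get(key)
        if item is None:
            return default
        expires_at, value = item
        if self._clock() >= expires_at:
            del self._data[key]          # TTL expiry: drop the stale entry
            return default
        self._data.move_to_end(key)      # mark as most recently used
        return value

    def put(self, key: Hashable, value: Any) -> None:
        self._data[key] = (self._clock() + self._ttl, value)
        self._data.move_to_end(key)
        while len(self._data) > self._max:
            self._data.popitem(last=False)  # evict least recently used
```

A short TTL favors freshness, while a larger entry cap favors hit rate; the right balance depends on how often the underlying data changes.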

Disadvantages of Using Distributed Cache

- Data consistency complexity across nodes

- Additional operational overhead

- Challenging cache invalidation design

- Memory management risks under heavy load

- Potential platform cost increase

1. Data Consistency

One of the biggest challenges is maintaining consistency across cache nodes. If not handled properly, cached data can become stale or inconsistent, leading to incorrect results.

2. Complexity and Overhead

Adding distributed caching introduces another infrastructure layer that must be designed, implemented, and maintained, increasing operational complexity.

3. Cache Invalidation

Ensuring that cached data is updated when the source data changes is difficult and requires a carefully designed invalidation strategy.
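One simple invalidation strategy is to evict the cached entry whenever the application writes to the source of truth. The sketch below shows the idea with hypothetical names (`InvalidatingRepository`, and a plain dictionary standing in for the database); it is single-threaded and does not address races between concurrent readers and writers.

```python
from typing import Any, Dict, Optional


class InvalidatingRepository:
    """Reads go through the cache; writes evict the affected key."""

    def __init__(self, database: Dict[str, Any]) -> None:
        self._db = database                  # stand-in for the source of truth
        self._cache: Dict[str, Any] = {}

    def read(self, key: str) -> Optional[Any]:
        if key in self._cache:
            return self._cache[key]
        value = self._db.get(key)
        if value is not None:
            self._cache[key] = value         # populate on first read
        return value

    def write(self, key: str, value: Any) -> None:
        self._db[key] = value                # update the source first
        self._cache.pop(key, None)           # then drop the stale copy
```

This only works if every write goes through `write`. Any change made directly to the database bypasses the eviction and leaves a stale entry behind, which is one reason a TTL is often kept as a backstop.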

4. Memory Management

Because a cache relies on memory, an unbounded or poorly sized cache can create memory pressure and destabilize the overall platform.
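A common safeguard is to give the cache an explicit memory budget instead of letting it grow with traffic. The sketch below caps the total payload size and evicts the least recently used entries to stay under it. `ByteBudgetCache` is a hypothetical name, and the accounting counts only payload bytes, not keys or per-entry overhead, so treat the limit as approximate.

```python
from collections import OrderedDict
from typing import Optional


class ByteBudgetCache:
    """Cache that keeps total payload size under a fixed byte budget."""

    def __init__(self, max_bytes: int) -> None:
        self._max_bytes = max_bytes
        self._used = 0
        self._data: "OrderedDict[str, bytes]" = OrderedDict()

    @property
    def used_bytes(self) -> int:
        return self._used

    def put(self, key: str, payload: bytes) -> bool:
        # Remove any previous value for this key before accounting.
        old = self._data.pop(key, None)
        if old is not None:
            self._used -= len(old)
        if len(payload) > self._max_bytes:
            return False                     # can never fit; refuse to cache
        # Evict least recently used entries until the new payload fits.
        while self._data and self._used + len(payload) > self._max_bytes:
            _, evicted = self._data.popitem(last=False)
            self._used -= len(evicted)
        self._data[key] = payload
        self._used += len(payload)
        return True

    def get(self, key: str) -> Optional[bytes]:
        payload = self._data.get(key)
        if payload is not None:
            self._data.move_to_end(key)      # mark as most recently used
        return payload
```

Refusing oversized entries outright keeps a single large value from flushing the whole cache, at the cost of that value always being fetched from the source.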

5. Costs

Although open-source options are available, enterprise-grade or managed cache solutions can introduce additional cost that must be balanced against performance gains.

Conclusion

Distributed caching can deliver substantial improvements in application performance, reduce database load, and improve scalability. Its ability to provide low-latency data retrieval makes it attractive for responsive, high-throughput systems.

At the same time, the trade-offs should not be underestimated: consistency management, added operational complexity, invalidation challenges, memory management, and cost.

The right decision depends on workload characteristics, data consistency requirements, and operational maturity. A well-designed caching strategy, together with disciplined monitoring and maintenance, is key to capturing the upside while controlling the downside.